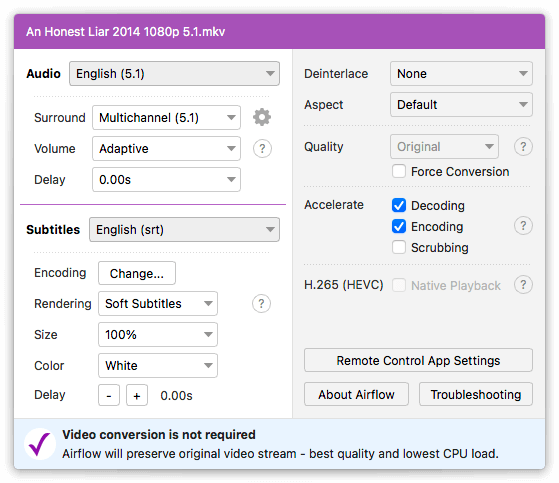

The official Airflow docs on Community Providers are also very helpful.

## Creating your new operator

All provider packages live in the `airflow/providers` subtree of the git repository. If you look in each provider directory, you'll see various directories including `hooks`, `operators`, and `sensors`. These provide some good examples of how to create your Operator.

For now, I'm going to create a new `emr_containers.py` file in each of the `hooks`, `operators`, and `sensors` directories. We'll be creating a new Hook for connecting to the EMR on EKS API, a new Sensor for waiting on jobs to complete, and an Operator that can be used to trigger your EMR on EKS jobs.

I won't go over the implementation details here, but you can take a look at each file in the Airflow repository. One thing that was confusing to me during this process is that all three of those files have the same name, so at a glance it was tough for me to know which component I was editing. But if you can keep this diagram in your head, it's pretty helpful. Note that there is no EMRContainerSensor in this workflow. That's because the default operator handles polling/waiting for the job to complete itself.

As with the provider packages themselves, their tests live in the `tests/providers` subtree. With the AWS packages, many plugins use the moto library for testing, an AWS service mocking library. EMR on EKS is a fairly recent addition, so it's unfortunately not part of the mocking library. Instead, I used the standard mock library to return sample values from the API.

The tests are fairly standard unit tests, but what gets challenging is figuring out how to actually RUN these tests.

## Up and running with Breeze

Airflow is a monorepo. There are many benefits and challenges to this approach, but what it means for us is we have to figure out how to run the whole smorgasbord of this project. Luckily(!) Airflow has a cool CI environment known as Breeze that can pretty much do whatever you need to make sure your new plugin is working well!

The main challenge I had with Breeze was resource consumption. Breeze requires a minimum of 4GB RAM for Docker and 40GB of free disk space. I had to tweak both of those settings on my Mac, but even so I think Breeze is named for the winds that are kicked up by laptop fans everywhere when it starts.

In any case, you should be able to just type `breeze` and be dropped into an Airflow shell after it builds a local version of the necessary Docker images.

Once in the Airflow shell, you should be able to run any of your unit tests:

`breeze tests tests/providers/amazon/aws/sensors/test_emr_containers.py tests/providers/amazon/aws/operators/test_emr_containers.py tests/providers/amazon/aws/hooks/test_emr_containers.py`

Now, let's make sure we have an example DAG to run. It's crucial to provide an example DAG to show folks how to use your new Operator. It's also crucial to have an example DAG so you can make sure your Operator works!

For AWS, example DAGs live in `airflow/providers/amazon/aws/example_dags`. For testing, however, you'll need to copy or link your DAG into `files/dags`. When you run `breeze`, it will mount that directory and the DAG will be available to Airflow.

## Build your provider package

First, we need to build a local version of our provider package with the `prepare-provider-packages` command.
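To make the Hook/Operator split and the mock-based testing approach described earlier a bit more concrete, here is a minimal, framework-free sketch. It is not the code that lives in the Airflow repository: `SketchEmrContainersHook`, `run_job`, and `make_mock_client` are hypothetical names invented for illustration, and the response shapes (`id`, `jobRun.state`) are assumptions modeled on the boto3 `emr-containers` client. The idea is simply that the hook wraps the API client, the operator-like function submits a job and polls until it finishes, and the standard `mock` library stands in for the API.

```python
import time
from unittest import mock

# Job states treated as final; modeled on the EMR on EKS API (assumption).
TERMINAL_STATES = {"COMPLETED", "FAILED", "CANCELLED"}


class SketchEmrContainersHook:
    """Hypothetical stand-in for a hook; NOT Airflow's real class."""

    def __init__(self, client, virtual_cluster_id):
        # In a real hook this would be a boto3 "emr-containers" client.
        self.conn = client
        self.virtual_cluster_id = virtual_cluster_id

    def submit_job(self, name):
        # Start the job and hand back its id.
        response = self.conn.start_job_run(
            name=name, virtualClusterId=self.virtual_cluster_id
        )
        return response["id"]

    def check_job_state(self, job_id):
        # Ask the API for the current state of a job.
        response = self.conn.describe_job_run(
            virtualClusterId=self.virtual_cluster_id, id=job_id
        )
        return response["jobRun"]["state"]

    def poll_until_done(self, job_id, poll_interval=0.0, max_tries=10):
        # Poll until a terminal state is seen, or give up and return None.
        for _ in range(max_tries):
            state = self.check_job_state(job_id)
            if state in TERMINAL_STATES:
                return state
            time.sleep(poll_interval)
        return None


def run_job(hook, name):
    """Operator-like helper: submit, wait, and fail loudly on a bad outcome."""
    job_id = hook.submit_job(name)
    final_state = hook.poll_until_done(job_id)
    if final_state != "COMPLETED":
        raise RuntimeError(f"Job {job_id} ended in state {final_state}")
    return job_id


def make_mock_client(states):
    """Build a mock API client that returns sample values instead of calling AWS."""
    client = mock.MagicMock()
    client.start_job_run.return_value = {"id": "job123"}
    # Each describe_job_run call yields the next state in the list.
    client.describe_job_run.side_effect = [{"jobRun": {"state": s}} for s in states]
    return client


# A tiny "unit test": the job goes PENDING -> RUNNING -> COMPLETED.
client = make_mock_client(["PENDING", "RUNNING", "COMPLETED"])
hook = SketchEmrContainersHook(client, virtual_cluster_id="vc1")
job_id = run_job(hook, "example-job")

assert job_id == "job123"
assert client.describe_job_run.call_count == 3
```

Because the operator-like helper does its own polling, no separate sensor is needed in this flow, which mirrors the note above about the default operator waiting for the job itself. In a real test you would patch the hook's connection rather than pass a client in directly, but the sample-values idea is the same.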
